In the context of conversational AI, instant learning refers to a cognitive function we are all familiar with: learning instantly from conversations.

If someone tells you “beware of the dog when you enter the yard”, your brain processes it immediately and you absorb that knowledge. Once you have learned it, you may warn another person by saying “be careful, there is a dog in the yard.” Why is it so difficult to teach a computer to do the same? Actually, instant learning technology is already here, as explained below.

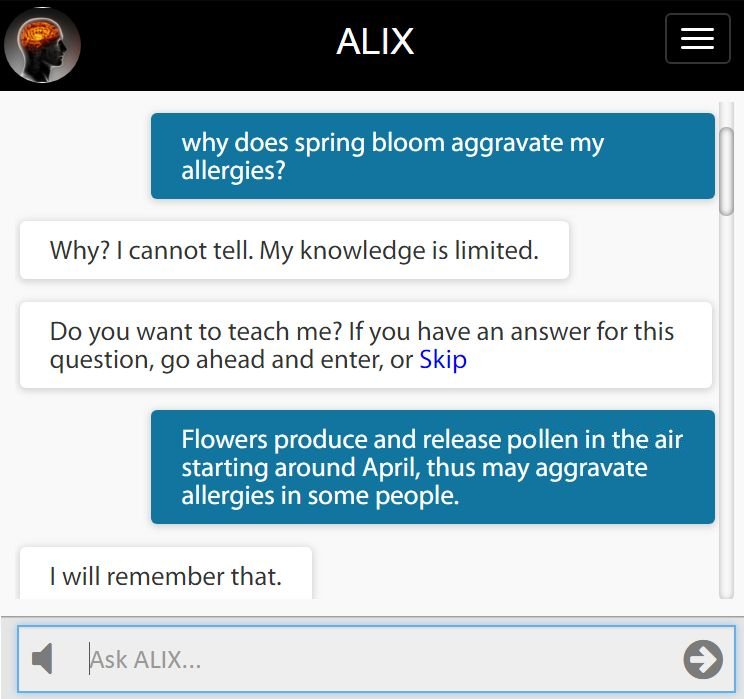

Instant Learning Example

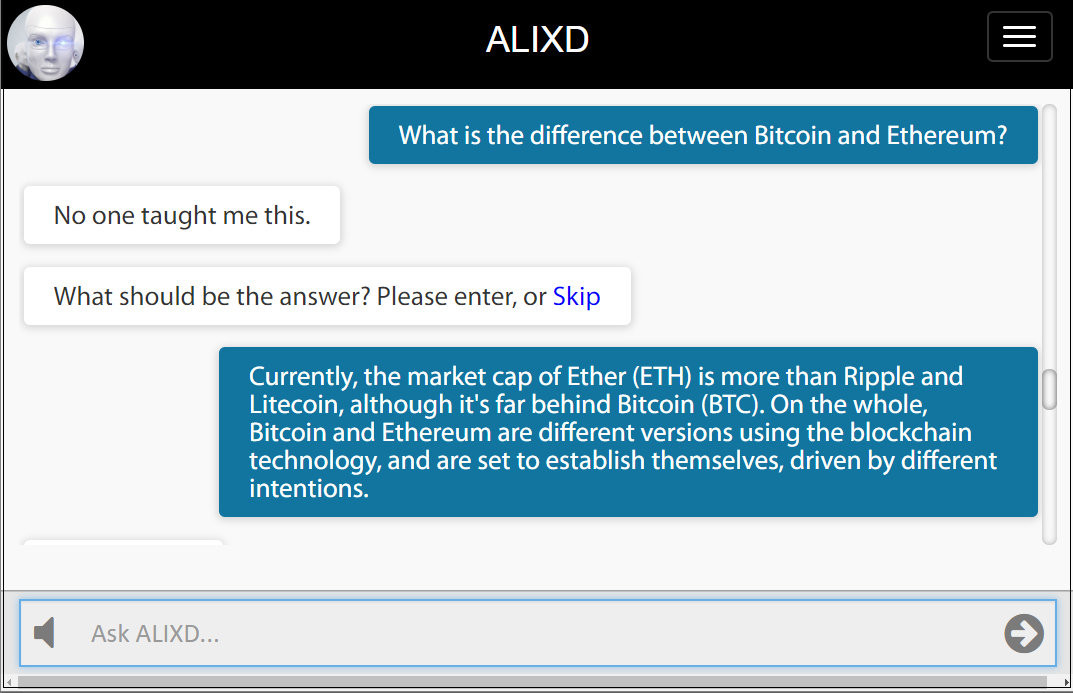

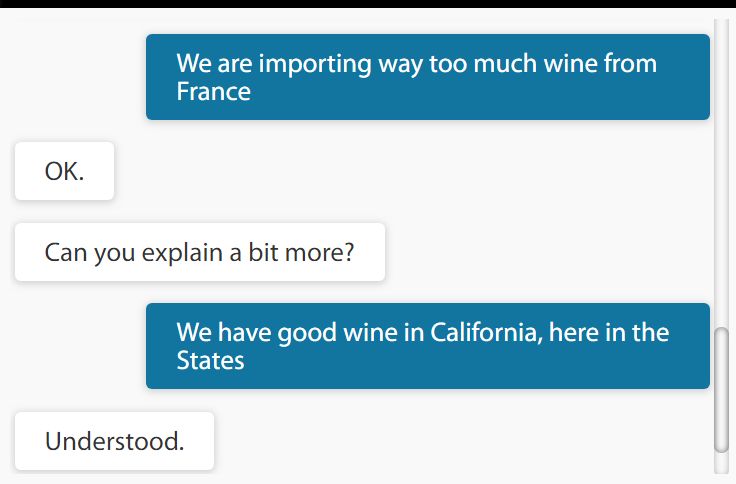

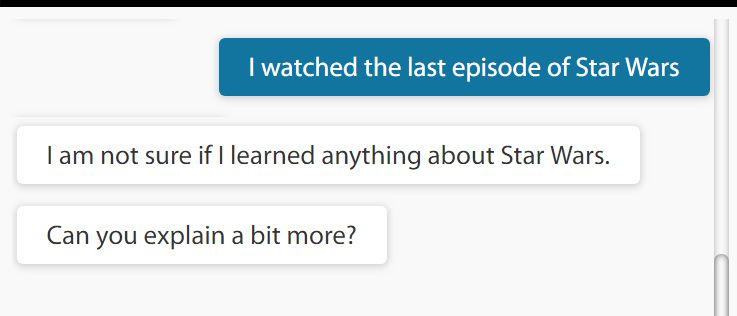

ALIXD is a conversational system that can be switched to learning mode at any point in a conversation by entering a password (see the end of the article for how to test ALIXD). As shown below, ALIXD learns new knowledge about Bitcoin and Ethereum from its human teacher.

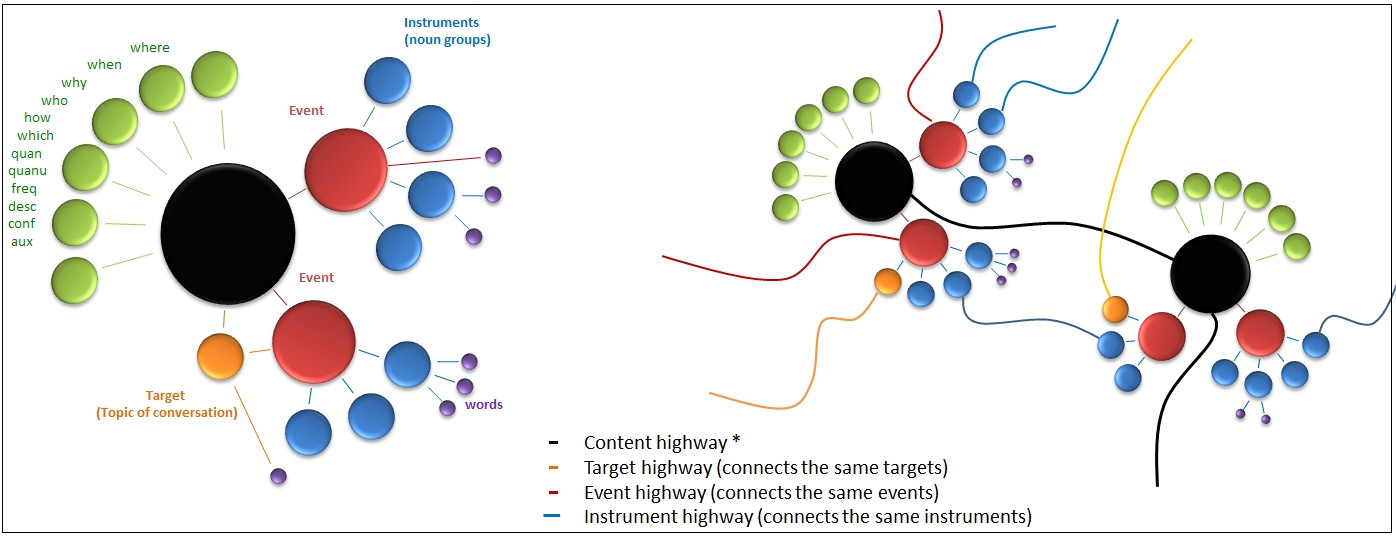

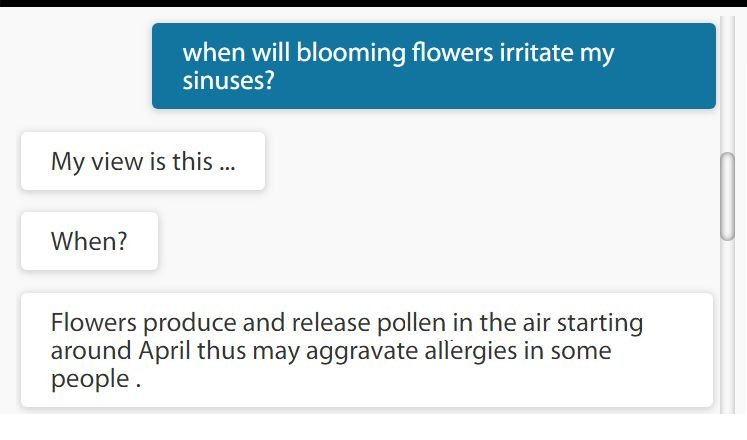

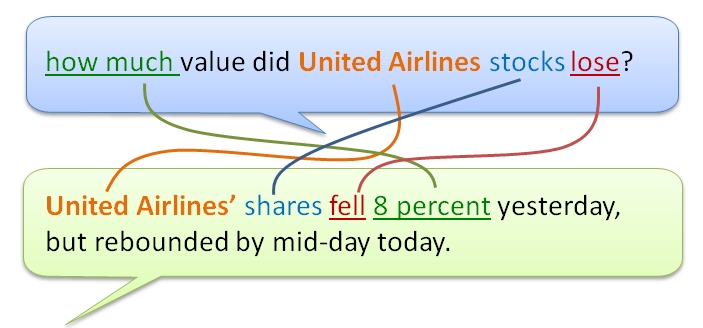

The important point here is that the system will bring this answer to approximately 960 different semantic variations of a relevant question. This number is computed by a simple equation using ontological parameters: V = T × N × E × I, where V is the number of variations, T is the number of question types (typically 20), N is the number of onomasticon entries (6), E is the number of events (2), and I is the number of instruments (4), so V = 20 × 6 × 2 × 4 = 960. N, E, and I all count words in the answer as well as in the question entered by the teacher.
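The arithmetic behind that figure can be checked with a short sketch. The function and parameter names are hypothetical and chosen only for this illustration; the code evaluates the equation with the values quoted above and says nothing about how ALIXD derives those counts from a question and answer.

```python
def variation_count(question_types: int, names: int, events: int, instruments: int) -> int:
    """Evaluate V = T x N x E x I for the given ontological counts."""
    return question_types * names * events * instruments


# Values quoted in the article: T = 20, N = 6, E = 2, I = 4.
v = variation_count(question_types=20, names=6, events=2, instruments=4)
print(v)  # 960
```

Because the counts multiply, adding a single extra event to the same example (E = 3) would raise the total to 1,440.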

Being able to bring an answer to hundreds of different meaningful variations of a single question is highly similar to the human brain’s cognitive skill in instant learning during conversations.

Two examples (out of a possible 960) are shown below. If no other knowledge has been entered into the system, these variations are easy to track. If new, relevant knowledge is added, the system will pick the answer that is the best semantic match to the embedded meaning.

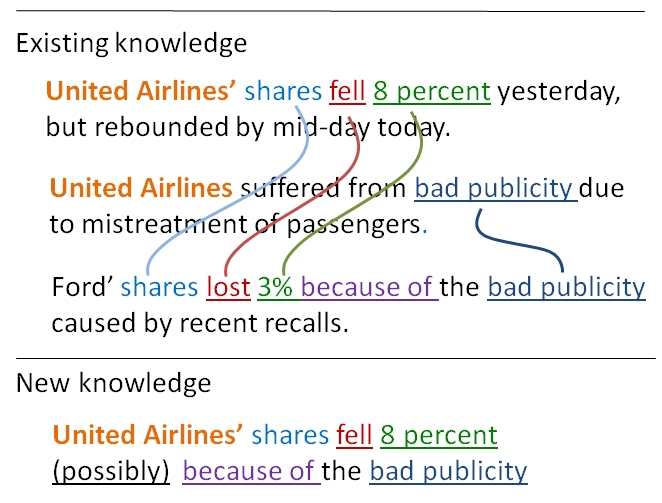

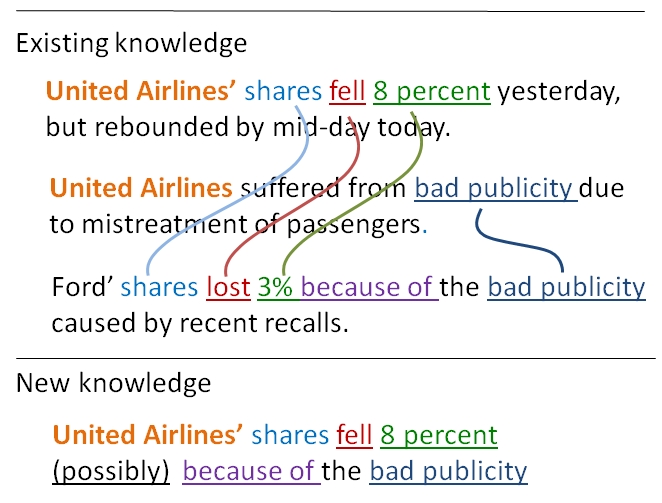

Mechanics of Instant Learning

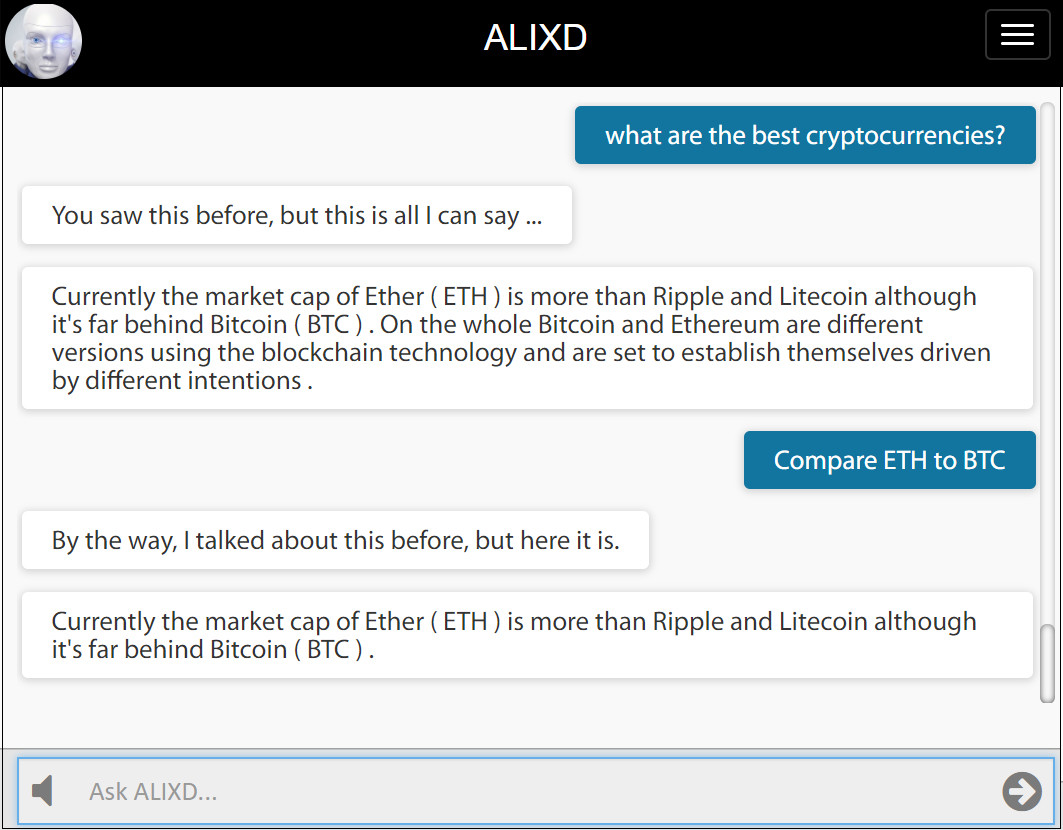

The departure point of instant learning is to represent a piece of knowledge by a group of linguistic neurons, as shown below (left). When another piece of knowledge is entered, a new group of linguistic neurons appears, and the two groups connect (right) based on their ontological properties. If a property is identical (such as the same event), the corresponding neurons fuse into one. As more knowledge is added to the system, a vast network of ontological relationships emerges. Entire documents can be learned in a single step of this fusion process. This approach makes it easy to answer questions and to deduce new knowledge by logical resolution. The inner workings of this method are proprietary.
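Since the actual method is proprietary, the following is only a toy sketch of the general idea of fusing identical nodes, not ALIXD's implementation. It assumes each piece of knowledge can be reduced to a small set of (role, value) pairs; the class name, the roles, and the Bitcoin and Ethereum statements are all invented for illustration.

```python
from collections import defaultdict


class ToyKnowledgeNet:
    """Toy network: each node is a (role, value) pair, e.g. ("event", "mine")."""

    def __init__(self):
        self.neighbors = defaultdict(set)  # node -> directly connected nodes
        self.sources = defaultdict(set)    # node -> ids of statements that use it

    def learn(self, statement_id, properties):
        """Add one statement given as {role: value}; identical nodes are reused."""
        nodes = {(role, value.lower()) for role, value in properties.items()}
        for node in nodes:
            self.sources[node].add(statement_id)
            # Connect the node to every other node of the same statement.
            self.neighbors[node] |= nodes - {node}

    def fused_nodes(self):
        """Nodes shared by more than one statement, i.e. the 'fused' ones."""
        return {node for node, ids in self.sources.items() if len(ids) > 1}


net = ToyKnowledgeNet()
# Hypothetical statements, invented for this example.
net.learn("s1", {"name": "Bitcoin", "event": "mine", "instrument": "computer"})
net.learn("s2", {"name": "Ethereum", "event": "mine", "instrument": "GPU"})

print(net.fused_nodes())  # {('event', 'mine')}
print(sorted(net.neighbors[("event", "mine")]))
```

In this sketch the shared event node becomes the bridge between the two statements, so a lookup that starts from "mine" reaches both Bitcoin and Ethereum without any training step.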

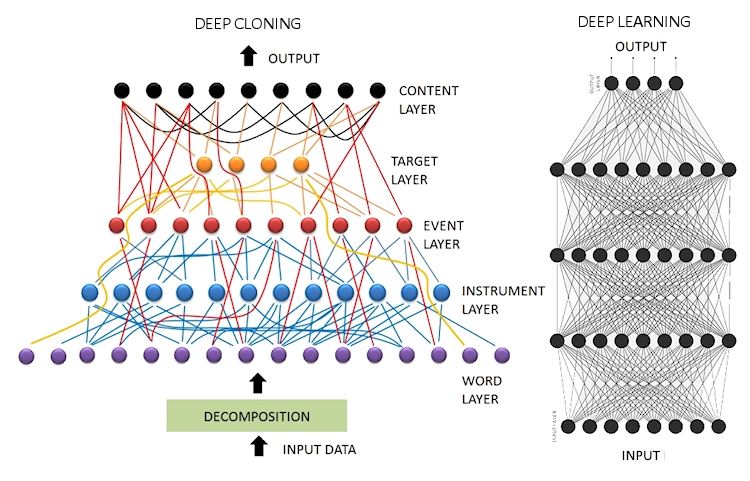

Compared to Deep Learning (DL)

As the name implies, there is nothing “instant” about deep learning. In short, the DL approach to conversational AI goes against our natural life experiences. For example, the instant learning example shown above cannot be replicated by DL.

First of all, the DL method requires a vast amount of data (far more than a single Q&A pair) to climb the ladder of language proficiency. Then a training process must be run until it converges. After this time-consuming process, a DL network can be claimed to function as intended. However, any new addition of knowledge would require expanding the training data set and retraining the network.

While current DL methods are producing impressive results in image processing and kinematics, there are serious problems in applying them to conversational AI, mainly caused by the uniformity of neurons (no neurons with a linguistic role), the limitations of vector space modulation, and statistical bias. More explanations can be found in my previous articles listed below.

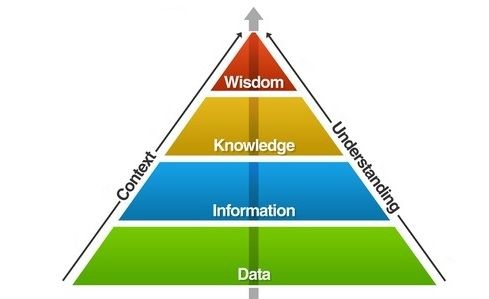

Instant learning comes from knowledge science, whereas deep learning is rooted in data science. In the pyramid of hierarchy, knowledge science works from top to bottom, whereas data science works from bottom to top. Going from bottom to top suits image processing well, for example, yet it becomes impractical and a poor fit for natural language processing, at least with the current approaches to deep learning.

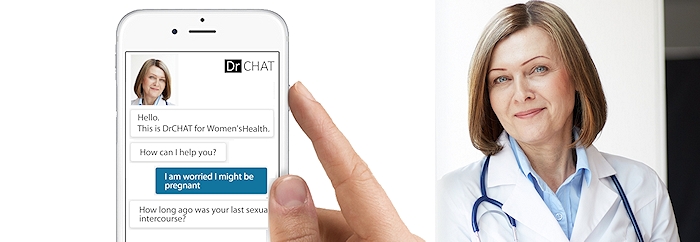

Instant Learning API

Instant learning enables conversational systems (chatbots) to continue learning after they are deployed. This allows organic growth of knowledge through designated teachers, or sometimes through the end users. The ALIXD API can be integrated into any conversational system and will be available soon. Interested parties can contact me for notification.

Test ALIXD

You can test ALIXD at this link by entering the temporary password 0014. Note that if other people are adding knowledge about the same subject, you may find the system more versatile.

__________

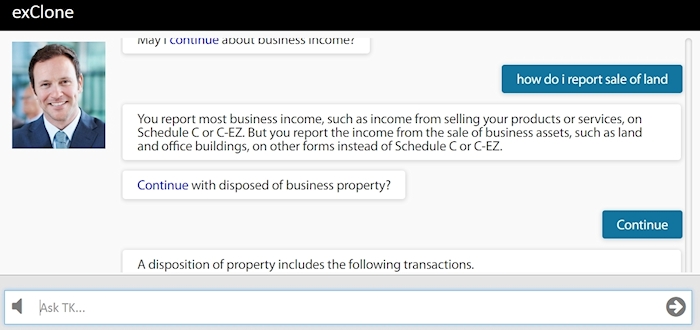

This article is brought to you by exClone, a chatbot technology provider.

Join the CHATBOTS group on LinkedIn.

You can follow exClone on Facebook and on LinkedIn.


Is Google Hyping it? Why Deep Learning cannot be Applied to Natural Languages Easily (41,655 views)

How does IBM Watson Compare to Google’s Hype of Deep Learning for NLP? (10,843 views)

Why Deep Learning is Not a Good Fit For Chatbots: Combinatory Explosion Problem (4,465 views)

Why Deep Learning and NLP Don’t Get Along Well? (6,596 views)

Can Machine Learning Use Knowledge instead of Data? Deep Cloning vs Deep Learning (5,823 views)

Deep Cloning vs Deep Learning (3,672 views)

Most Chatbots Don’t Use AI, are Misrepresenting AI (2,695 views)